
How do language-based AIs, such as GPT, work?

Table of contents

- → Introduction

- → What does GPT stand for?

- → How does GPT work?

- → How can GPT feel so smart?

- → Why is GPT often referred to as a “model?”

- → Is GPT smart enough to be sentient?

- → What’s the difference between GPT and ChatGPT?

- → Who owns GPT?

- → Can I download ChatGPT and try it for free?

- → Could GPT dominate humanity at some point?

- → Learn how to build a simple language model

Introduction

This article aims to demystify GPT in the simplest way possible and give you a high-level understanding of large language models (or LLMs), which are often referred to as “Artificial Intelligence.”

That way, you will be able to use them more effectively in your next project or when “chatting” with them (through ChatGPT for instance).

What does GPT stand for?

GPT stands for Generative Pre-trained Transformer.

This article explains why this Artificial Intelligence is generative, why it’s been pre-trained, and what a Transformer is, in the easiest way I possibly can.

How does GPT work?

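Here’s a simplified way to see it: at the root of GPT is a next-word prediction algorithm, built on a neural network architecture named “Transformer.” Its predictions are based on the patterns it picked up from its training data.

GPT doesn’t see words the way we do. Text is first split into “tokens” (words or pieces of words), and each token is mapped to a number. Those numbers are all the model ever sees, both in the text it was trained on and in the text you send it.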
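To make the idea of turning text into numbers concrete, here’s a minimal sketch in Python. It’s a toy: it assigns one number per whole word, whereas GPT’s real tokenizer works on pieces of words and has a far larger vocabulary. The function names (`build_vocab`, `encode`, `decode`) are made up for this illustration.

```python
def build_vocab(text):
    """Assign an integer ID to each distinct word, in order of first appearance."""
    vocab = {}
    for word in text.lower().split():
        if word not in vocab:
            vocab[word] = len(vocab)
    return vocab


def encode(text, vocab):
    """Turn a sentence into the list of numbers the model would see."""
    return [vocab[word] for word in text.lower().split()]


def decode(ids, vocab):
    """Turn a list of numbers back into readable text."""
    id_to_word = {i: word for word, i in vocab.items()}
    return " ".join(id_to_word[i] for i in ids)


corpus = "the cat sat on the mat"
vocab = build_vocab(corpus)
ids = encode(corpus, vocab)

print(ids)                 # [0, 1, 2, 3, 0, 4]
print(decode(ids, vocab))  # the cat sat on the mat
```

Notice that “the” appears twice and gets the same number both times. The numbers are just labels; everything the model knows comes from how those labels appear together.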

How can GPT feel so smart?

GPT has been trained with such an astronomical amount of data that, at some point, it started to show signs of (artificial) intelligence.

Imagine something that’s been forced to digest a huge amount of knowledge and can’t help but become smarter thanks to it.

However, don’t try to think of it as a human being or even a particularly smart animal. You’ll have a hard time making sense of this.

On its own, GPT doesn’t learn anything beyond its training data. It doesn’t work like our brains. It’s just a next-word prediction algorithm based on a statistical model of human languages.

Why is GPT often referred to as a “model?”

GPT is called a model because it has been trained to model the statistical patterns of language.

More specifically, it has learned to predict the probability of the next word given all the words that come before it, which allows it to generate text similar to what humans write. This is why the amount and quality of its training data determine how smart it can be.
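A toy version of this idea fits in a few lines of Python. Note the big simplification: this sketch only looks at the single previous word and simply counts what follows it, while GPT takes a long stretch of the preceding text into account and uses a neural network instead of a table of counts. The names `train_bigram` and `next_word_probs` are hypothetical, chosen for this example.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Convert the raw counts for one word into probabilities."""
    followers = counts.get(prev, Counter())
    total = sum(followers.values())
    return {word: count / total for word, count in followers.items()}


corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)
probs = next_word_probs(model, "the")

# In this corpus, "the" is followed by "cat" twice and by "mat" once.
print(probs)
```

With more (and better) text to count from, the probabilities get closer to how people actually write, which is the same reason training data matters so much for GPT.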

Is GPT smart enough to be sentient?

GPT is just a language model that gives the illusion of intelligence. It’s nowhere near an artificial being. Besides, we still don’t have a precise scientific definition of consciousness to measure it against.

What’s the difference between GPT and ChatGPT?

ChatGPT is just an application, available on the web and on mobile, that wraps the GPT model in a user-friendly chat interface so anybody can interact with it.

Who owns GPT?

GPT is owned by OpenAI.

When I discovered the company, I was surprised to learn that OpenAI began as a non-profit.

It was founded in December 2015, with the goal of promoting and developing friendly AI in a way that benefits humanity as a whole.

It has since transitioned to a capped-profit model and has made significant strides in AI research and development, notably creating advanced language models like GPT-3 and GPT-4 and engaging in various AI-related partnerships. (The most notable is with Microsoft, which integrated GPT into Bing.)

Can I download ChatGPT and try it for free?

Yes, you can download ChatGPT’s applications for free on iOS, iPadOS, and Android.

You can also use ChatGPT on any platform via its website.

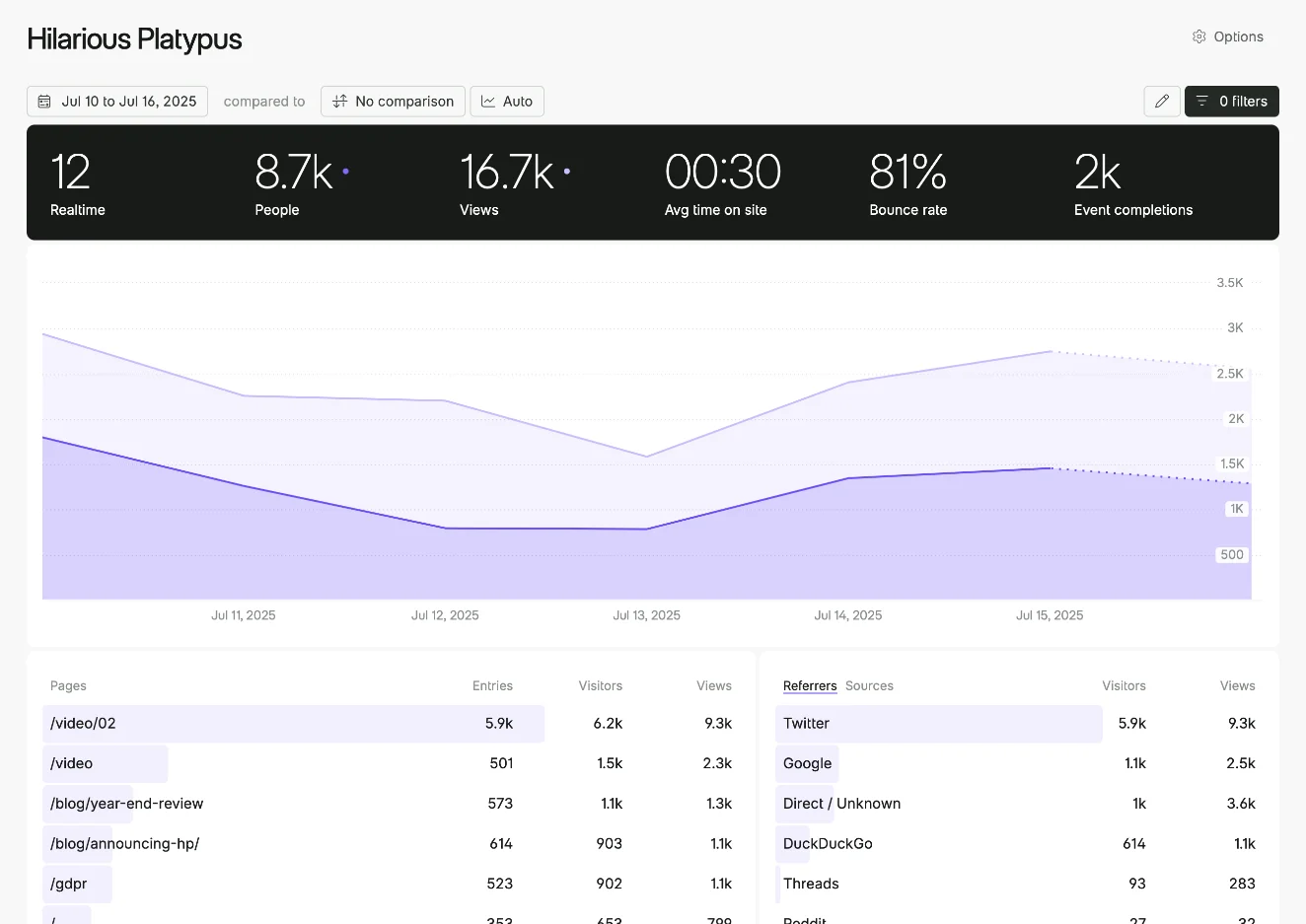

And by the way, I created a free tool that generates YouTube video transcripts and helps you summarize them using ChatGPT. If you need inspiration, that’s a good starting point to leverage GPT’s power!

Could GPT dominate humanity at some point?

AI could change humanity for the better.

But some people could use it for harmful purposes.

Some experts even say it’s possible that AI could try to control humanity at some point, even if we can’t imagine its motives yet.

Currently, though, GPT is light years away from being able to do that.

Learn how to build a simple language model

Andrej Karpathy, one of OpenAI’s co-founders, created an incredible video showing how to build a simplified version of GPT.

This tiny GPT predicts the next character instead of the next word and is trained on a small dataset. That makes everything less computationally intensive, so you can focus on learning.

https://www.youtube.com/watch?v=kCc8FmEb1nY
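If you want a feel for next-character prediction before watching, here’s a minimal sketch. To be clear, this is not the code from the video: the video builds a real neural network, while this toy just counts which character tends to follow which and then samples from those counts. The function names and the tiny training text are assumptions made for this example.

```python
import random
from collections import Counter, defaultdict


def train_char_model(text):
    """Count, for every character, which characters follow it and how often."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(text, text[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length, seed=0):
    """Generate text one character at a time, sampling in proportion to the counts."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:
            break  # this character was never followed by anything in training
        chars = list(followers.keys())
        weights = list(followers.values())
        out.append(rng.choices(chars, weights=weights, k=1)[0])
    return "".join(out)


text = "hello world, hello there"
model = train_char_model(text)
sample = generate(model, "h", 20)
print(sample)
```

The output is mostly gibberish, which is expected with so little data and only one character of context. Still, this is the “generative” part of GPT in miniature: predict what comes next, append it, and repeat.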

Are you a developer? If so, are you ready to leverage GPT in your applications?

Then, check out my articles on this matter:

- Start using GPT-4 Turbo’s API in 5 minutes

- Start using GPT-3.5 Turbo’s API in 5 minutes

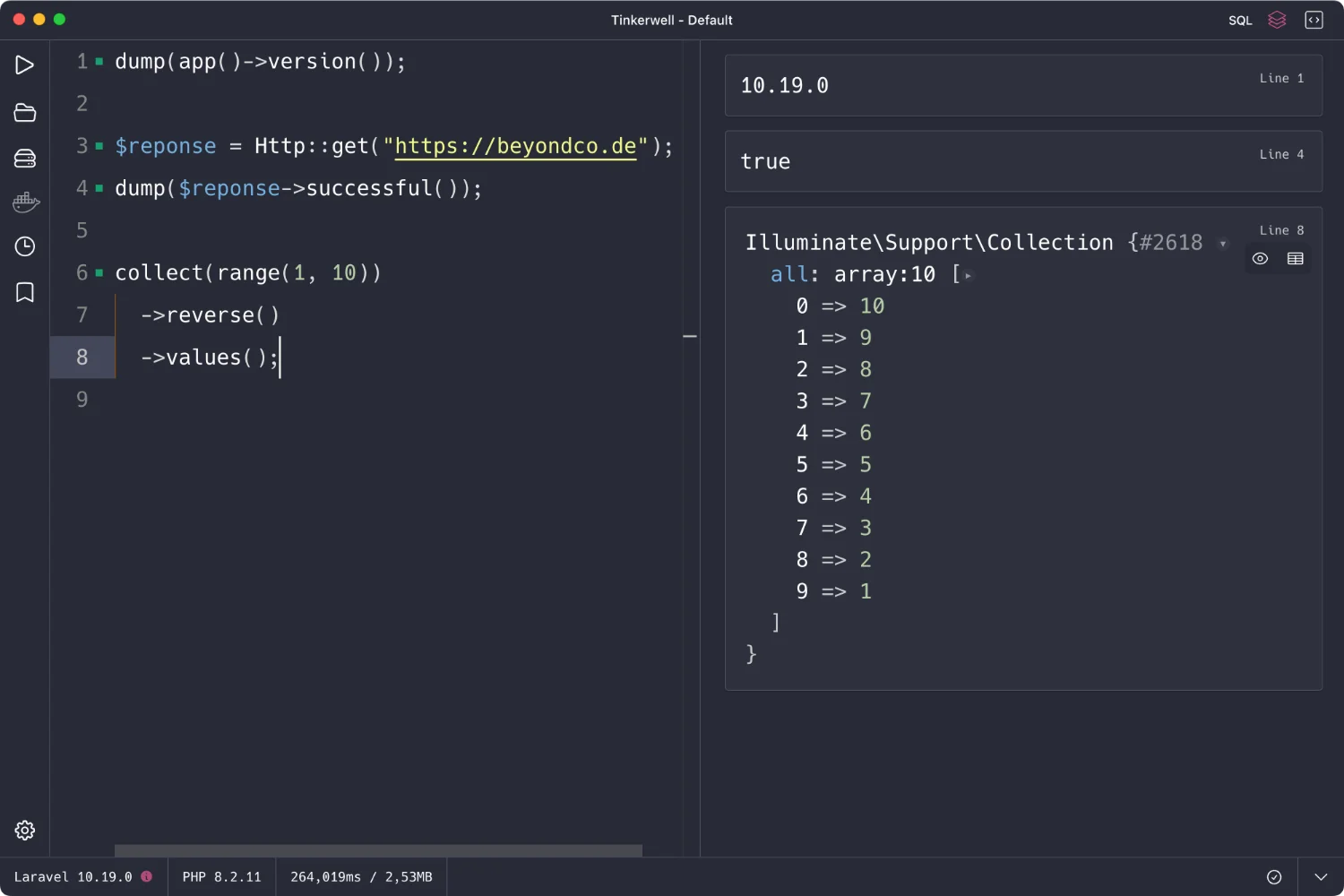

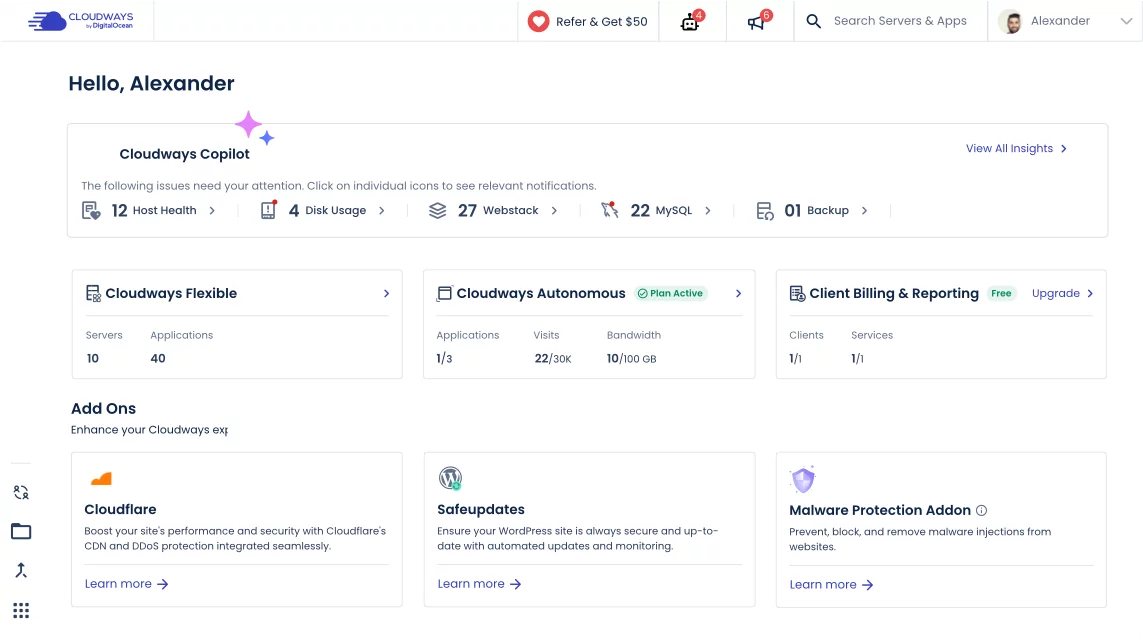

- Use PHP to leverage OpenAI’s API and GPT effortlessly

Did you like this article? Then, keep learning:

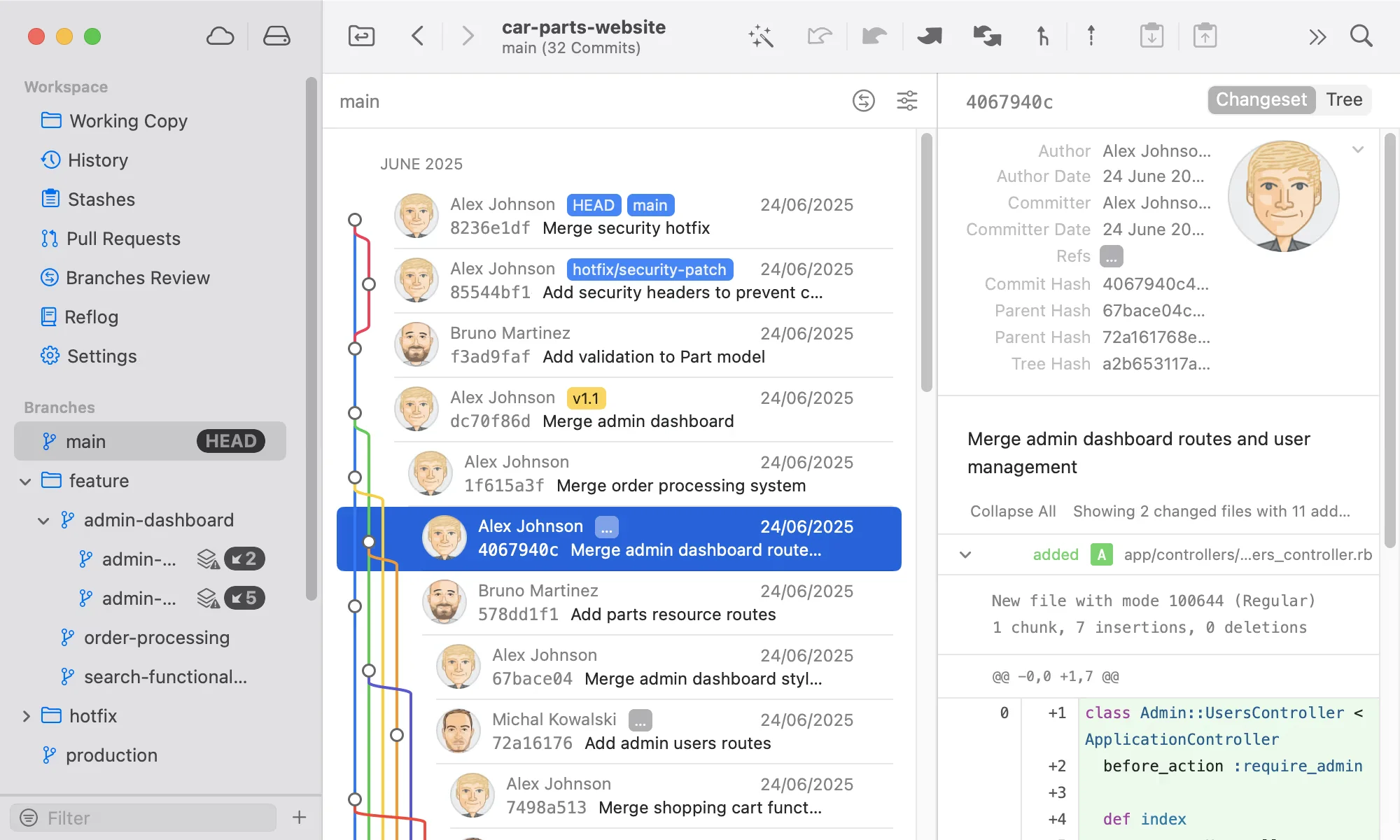

- Learn to build a ChatGPT plugin using Laravel to extend your app

- Get tips on rapidly generating Laravel Factories with ChatGPT assistance

- Learn how to leverage GPT APIs quickly for practical projects

- Step-by-step guide to using the latest GPT-4 Turbo API effectively

- Explore an easy-to-follow video tutorial to build a simple GPT model yourself

- Discover how PHP integrates with OpenAI's GPT for powerful functionality
